Artificial intelligence is everywhere.

From generative chatbots to predictive healthcare systems, AI appears unstoppable. Companies invest billions. Governments race for dominance. Headlines promise transformation across every industry.

However, beneath the excitement lies a quieter reality.

Artificial intelligence has limits—technical, economic, ethical, and structural—that are rarely discussed with the same enthusiasm as breakthroughs.

Understanding these limits is not pessimism. It is strategic clarity.

Because while AI is powerful, it is not omnipotent.

1. AI Does Not Truly “Understand”

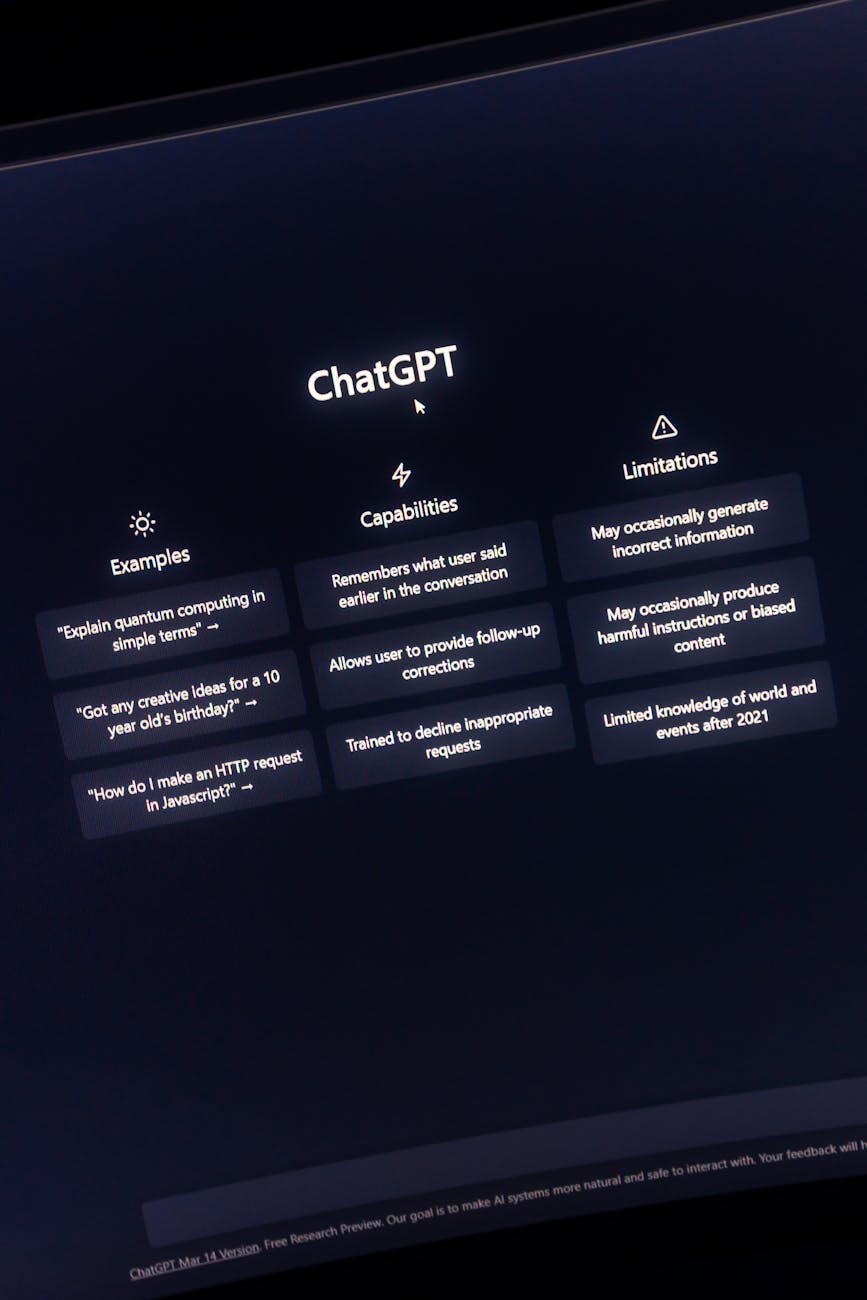

Modern AI systems like OpenAI’s GPT models and Google DeepMind’s advanced architectures can generate human-like text, solve complex tasks, and even pass professional exams.

Yet fundamentally, they do not understand meaning.

They detect patterns in massive datasets. They predict likely outputs based on probabilities. However, they lack consciousness, self-awareness, and contextual grounding in the human sense.

This limitation becomes visible when AI systems:

- Misinterpret ambiguous instructions

- Fail in unfamiliar edge cases

- Confidently provide incorrect information

As explored in “AI Is Becoming a Powerful Cybersecurity Weapon”, AI enhances defence systems. However, without human oversight, pattern-based intelligence can produce unpredictable blind spots.

In short: AI simulates understanding—it does not experience it.

2. Hallucinations Remain a Structural Problem

One of the most under-discussed weaknesses of large language models is hallucination—the generation of false but plausible information.

Even advanced systems developed by Microsoft and Meta struggle with this issue.

Why does it happen?

Because language models are trained to produce the most probable continuation of a text, not to verify facts. If a response is statistically likely to “sound right,” the system may produce it even when it is wrong.
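To make the mechanism concrete, here is a minimal Python sketch. The prompt and the probabilities are invented for illustration and are not taken from any real model; the point is only that the selection step looks at likelihood and never at truth.

```python
import random

# Hypothetical next-token probabilities for the prompt below.
# These numbers are invented for illustration, not measured from a real model.
PROMPT = "The capital of Australia is"
NEXT_TOKEN_PROBS = {"Sydney": 0.55, "Canberra": 0.40, "Melbourne": 0.05}


def greedy_next_token(probs):
    """Return the single most probable token. No fact-checking happens here."""
    return max(probs, key=probs.get)


def sample_next_token(probs, rng):
    """Sample one token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


if __name__ == "__main__":
    # Greedy decoding picks the likeliest token, which in this toy
    # distribution is the plausible but incorrect "Sydney".
    print(PROMPT, greedy_next_token(NEXT_TOKEN_PROBS))
    print(PROMPT, sample_next_token(NEXT_TOKEN_PROBS, random.Random(0)))
```

In this toy distribution the wrong answer is simply the more probable one, so the system states it fluently and with no signal that anything is off.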

This limitation creates significant challenges in:

- Legal research

- Medical guidance

- Financial analysis

- Academic citation

Consequently, AI cannot yet operate autonomously in high-stakes environments without layered verification systems.
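One layer of such verification might look like the sketch below. It assumes a hypothetical setup in which every draft answer lists the identifiers of the sources it cites, and a trusted index of known sources exists; the names `DraftAnswer`, `route`, and the identifiers are illustrative, not part of any real product.

```python
from dataclasses import dataclass, field


@dataclass
class DraftAnswer:
    """A model-generated answer plus the source IDs it claims to rely on."""
    text: str
    cited_ids: list = field(default_factory=list)


def route(answer, trusted_ids):
    """Return 'pass' only if every citation is found in the trusted index.

    Anything uncited or citing an unknown source is escalated to a person.
    Passing this check does not prove the answer is correct; it only
    filters out citations that do not exist in the index.
    """
    if not answer.cited_ids:
        return "escalate"  # nothing to verify against
    unknown = [c for c in answer.cited_ids if c not in trusted_ids]
    return "escalate" if unknown else "pass"


if __name__ == "__main__":
    index = {"case-001", "case-002"}  # hypothetical trusted source IDs
    print(route(DraftAnswer("Summary A", ["case-001"]), index))
    print(route(DraftAnswer("Summary B", ["case-999"]), index))
```

A real pipeline would stack further layers on top, such as checking that the cited source actually supports the claim, followed by human sign-off.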

3. AI Is Only as Good as Its Training Data

Artificial intelligence systems learn from historical data.

However, historical data contains bias, inaccuracies, and structural inequalities.

For example, the Gender Shades study led by MIT Media Lab researchers found that commercial facial analysis systems performed markedly worse on darker-skinned women than on lighter-skinned men.

Similarly, predictive policing tools and credit scoring algorithms have raised concerns about fairness.

This reveals a deeper limitation:

AI reflects the world it is trained on.

If the data is flawed, incomplete, or biased, the model will inherit those limitations—often at scale.

Therefore, “algorithmic objectivity” is largely a myth.
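A simple way to surface this kind of disparity is to break accuracy down by group instead of reporting a single headline number. The sketch below uses made-up records for two hypothetical groups, "A" and "B"; it illustrates the bookkeeping and does not reproduce any published result.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label).

    Returns a dict mapping each group to its accuracy.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {g: correct[g] / total[g] for g in total}


def overall_accuracy(records):
    """Accuracy over all records, ignoring group membership."""
    records = list(records)
    return sum(int(t == p) for _, t, p in records) / len(records)


if __name__ == "__main__":
    # Made-up toy data: group A is well represented, group B is not.
    toy = (
        [("A", 1, 1)] * 9 + [("A", 1, 0)] * 1
        + [("B", 1, 1)] * 3 + [("B", 1, 0)] * 2
    )
    print("overall:", overall_accuracy(toy))
    print("by group:", accuracy_by_group(toy))
```

With this toy data the overall figure looks respectable while the under-represented group fares noticeably worse, which is exactly the pattern an aggregate metric hides.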

4. Energy Consumption and Environmental Cost

AI innovation requires immense computational power.

Training large models involves thousands of GPUs running for weeks or months. Data centres operated by companies like Amazon and Google consume vast amounts of electricity.

As AI adoption grows, so does its environmental footprint.
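For a sense of scale, a back-of-envelope estimate multiplies the number of GPUs by their average power draw, the run time, and the data centre's power usage effectiveness (PUE, the ratio of total facility energy to the energy used by the computing equipment). The sketch below shows only the arithmetic; every input value is a hypothetical placeholder, not a figure for any real model or facility, and it ignores CPUs, networking, and storage.

```python
def training_energy_kwh(num_gpus, gpu_power_kw, hours, pue):
    """Rough facility energy for one training run, in kilowatt-hours.

    pue is power usage effectiveness: total facility energy divided by
    the energy used by the computing equipment itself (1.0 is the ideal).
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return num_gpus * gpu_power_kw * hours * pue


if __name__ == "__main__":
    # Hypothetical inputs, chosen only to demonstrate the calculation.
    estimate = training_energy_kwh(
        num_gpus=1000, gpu_power_kw=0.4, hours=720, pue=1.2
    )
    print(f"Estimated energy: {estimate:,.0f} kWh")
```

Because the terms multiply, doubling either the GPU count or the run time doubles the estimate, which is why efficiency gains in hardware and cooling matter so much as models grow.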

This introduces a paradox:

AI is often promoted as a solution to climate modelling and energy optimization. Yet its own infrastructure demands substantial energy resources.

Unless hardware efficiency and renewable integration improve significantly, AI scalability may face environmental constraints.

5. General Intelligence Remains Elusive

Despite rapid progress, artificial general intelligence (AGI)—machines capable of broad human-like reasoning—remains theoretical.

Today’s systems excel in narrow domains:

- Image recognition

- Natural language generation

- Game strategy

- Pattern detection

However, they struggle with:

- Long-term reasoning

- Abstract causal inference

- Transfer learning across unrelated domains

Even powerful systems such as IBM’s Watson illustrate how specialised intelligence does not equate to universal cognition.

As discussed in “How Humans Will Interact With Machines Next”, AI may become more integrated into daily workflows. Nevertheless, integration does not imply equivalence with human intelligence.

The gap between narrow AI and general intelligence remains substantial.

6. Security Vulnerabilities and Adversarial Attacks

Ironically, AI systems themselves can be exploited.

Researchers have demonstrated that slight input manipulations—known as adversarial attacks—can trick AI models into misclassifying images or misinterpreting commands.
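The idea can be shown on a toy linear classifier. The sketch below is in the spirit of the fast gradient sign method: for a linear model the gradient of the score with respect to the input is just the weight vector, so nudging each feature by a small amount against the sign of its weight lowers the score as quickly as possible. The weights and input are invented for illustration; real attacks target far larger models.

```python
def score(weights, bias, x):
    """Linear decision score: positive means class 1."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def classify(weights, bias, x):
    return 1 if score(weights, bias, x) > 0 else 0


def sign(v):
    return (v > 0) - (v < 0)


def perturb(weights, x, epsilon, target_class):
    """Shift every feature by at most epsilon toward target_class (0 or 1)."""
    direction = 1 if target_class == 1 else -1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]


if __name__ == "__main__":
    # Invented toy model and input.
    weights, bias = [0.5, -0.5, 0.25, -0.25], 0.0
    x = [0.5, 0.25, 0.5, 0.25]
    x_adv = perturb(weights, x, epsilon=0.25, target_class=0)
    print("original prediction:", classify(weights, bias, x))
    print("perturbed prediction:", classify(weights, bias, x_adv))
```

No single feature moves by more than `epsilon`, yet the predicted class flips, because many small changes all push the score in the same direction.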

In cybersecurity contexts, this creates high-risk scenarios.

As outlined in “Ransomware Attacks Are Evolving — Here’s How”, threat actors increasingly use AI to automate attacks. Simultaneously, AI-driven defence systems can be manipulated through data poisoning or model exploitation.

Therefore, AI is both a tool and a target.

Its robustness remains an ongoing challenge.

7. Legal and Regulatory Uncertainty

AI innovation often outpaces regulation.

Governments worldwide are attempting to develop governance frameworks. The European Union has adopted the AI Act, a comprehensive risk-based regulation, while policymakers in the United States continue to debate oversight models.

However, regulatory clarity is still evolving.

Key unresolved issues include:

- Liability for AI-generated errors

- Intellectual property ownership

- Cross-border data governance

- Accountability in autonomous decision-making

Until regulatory frameworks stabilise, large-scale AI deployment carries legal uncertainty.

8. Economic Displacement vs. Productivity Gains

AI increases efficiency.

Automation reduces manual workloads. Decision-support systems improve accuracy. Generative tools accelerate content production.

However, productivity gains may coincide with labour displacement.

Historically, technological revolutions—from industrial machinery to cloud computing—have disrupted job markets before creating new roles.

The difference with AI is speed.

If workforce adaptation lags behind automation deployment, economic inequality may widen.

Thus, AI’s limitation is not purely technical—it is socioeconomic.

9. Overreliance and Cognitive Atrophy

Another rarely discussed concern is human dependency.

As AI systems handle writing, coding, navigation, and decision support, users may gradually outsource cognitive effort.

While assistance enhances productivity, overreliance may weaken critical thinking, memory retention, and problem-solving skills.

In effect, the limitation may not be AI’s capability—but humanity’s adaptation to it.

10. AI Cannot Replace Human Judgment in Complex Moral Contexts

Finally, artificial intelligence lacks moral agency.

It does not possess values, empathy, or lived experience.

Although techniques such as reinforcement learning from human feedback (RLHF) attempt to align AI with human preferences, ethical nuance remains deeply human.

For example:

- End-of-life medical decisions

- Judicial sentencing

- Military engagement authorization

- Crisis negotiation

In these contexts, AI may assist, but cannot replace, human responsibility.
